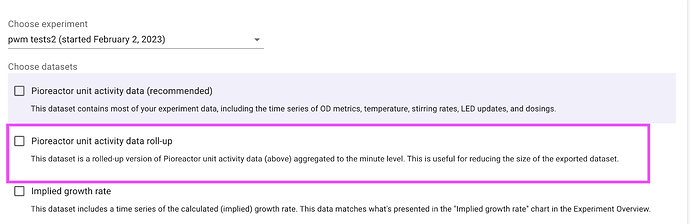

I was able to squeeze a rollup table into the just-released 23.2.6 version. See notes here. After updating, you should see a new export, “Pioreactor unit activity data rollup”, available:

This averages the data per minute, so you should see about 10x fewer rows in this dataset. It can also be exported for previous experiments.
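To illustrate where the row reduction comes from, here is a minimal sketch of per-minute averaging. The helper `rollup_per_minute` and the sample data are hypothetical and for illustration only; this is not how Pioreactor actually builds its rollup table. It assumes each reading is a `(timestamp, value)` pair.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean


def rollup_per_minute(samples):
    """Average (timestamp, value) samples into one row per calendar minute.

    Hypothetical helper for illustration; not Pioreactor's implementation.
    """
    buckets = defaultdict(list)
    for ts, value in samples:
        # Truncate each timestamp to the start of its minute.
        minute = ts.replace(second=0, microsecond=0)
        buckets[minute].append(value)
    # Emit one averaged row per minute, in chronological order.
    return [(minute, mean(values)) for minute, values in sorted(buckets.items())]


if __name__ == "__main__":
    start = datetime(2023, 2, 1, 12, 0, 0)
    # One hour of made-up readings at 5 seconds per sample: 720 rows.
    raw = [(start + timedelta(seconds=5 * i), float(i)) for i in range(720)]
    rolled = rollup_per_minute(raw)
    print(len(raw), len(rolled))  # 720 60
```

At 5 seconds per sample, each minute holds 12 readings that collapse into a single row, which is roughly the "about 10x" reduction mentioned above.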

With this, I think you can safely go back to 5 seconds per sample.

Let me know if you have any problems!